SC2020 High Performance Computing Showcase

HPC for Students

Explore how high-performance computing empowers students through research, internships, and outreach programs.

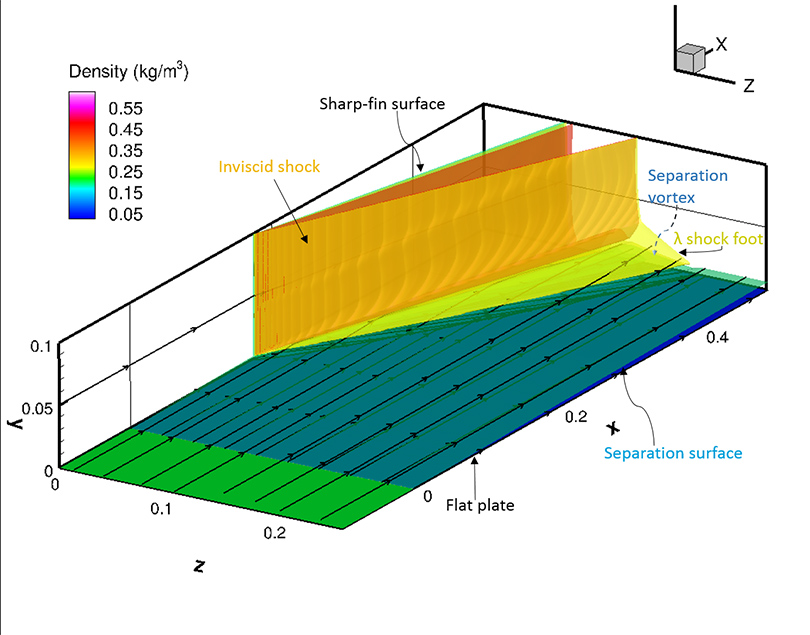

Space Exploration

Discover how supercomputing accelerates breakthroughs in space science, engineering, and exploration.

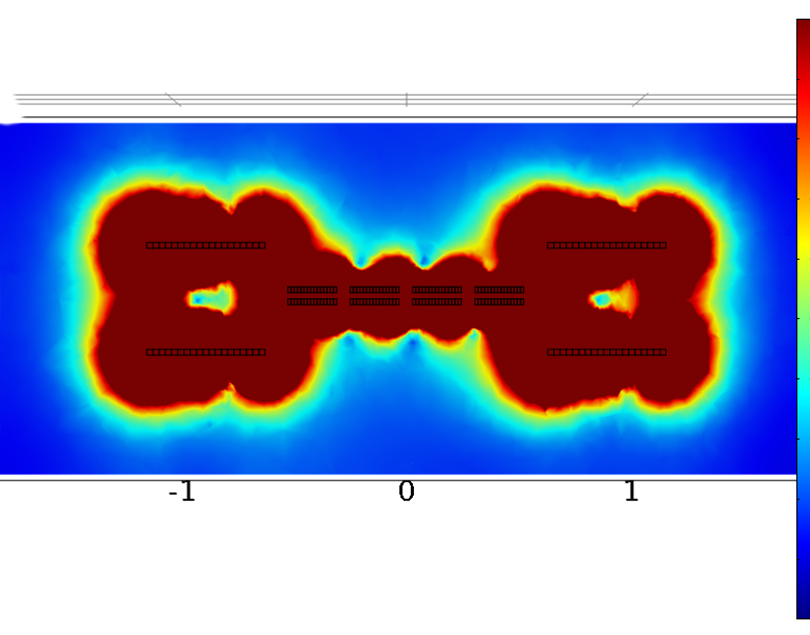

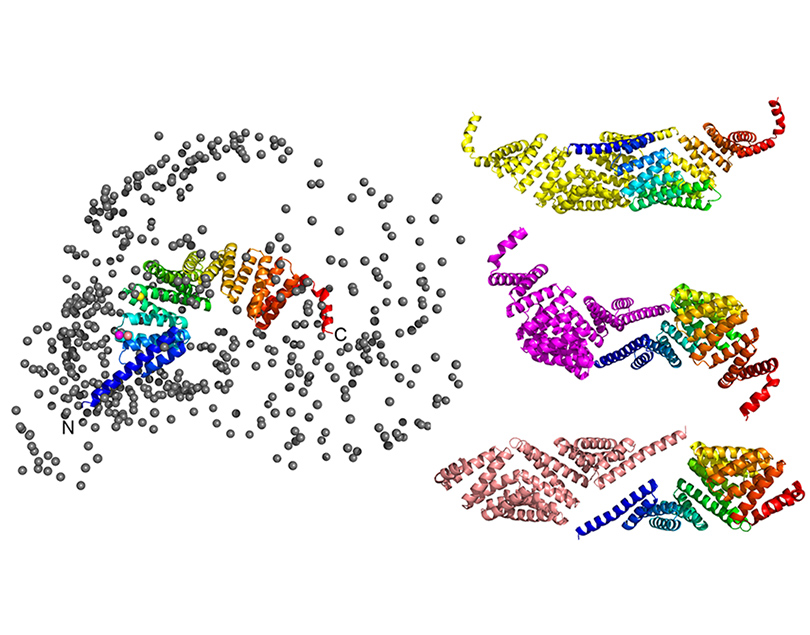

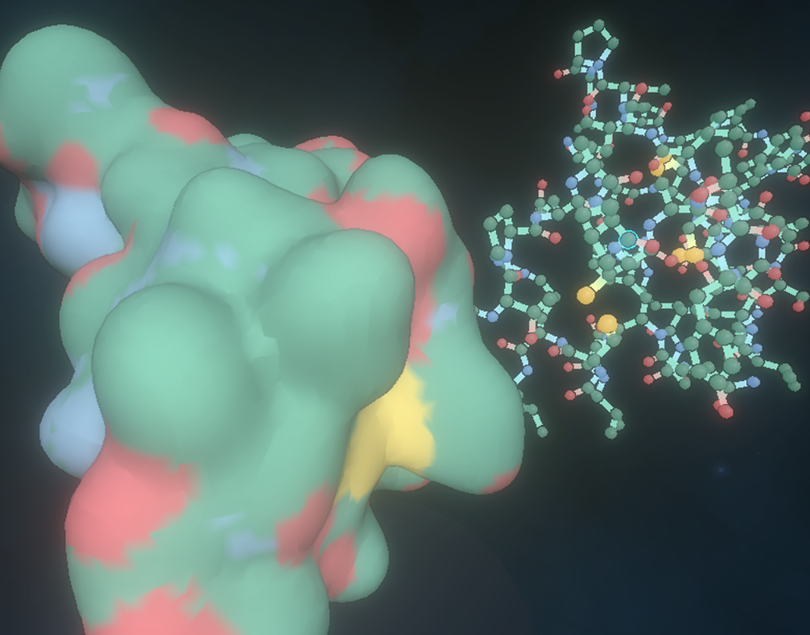

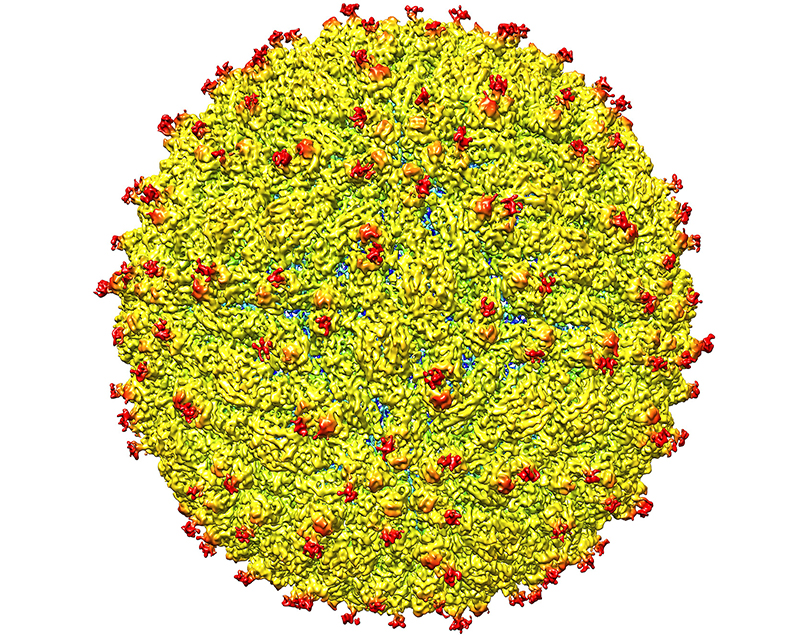

Health and Longevity

See how advanced computing helps researchers model proteins, discover drugs, and improve global health.

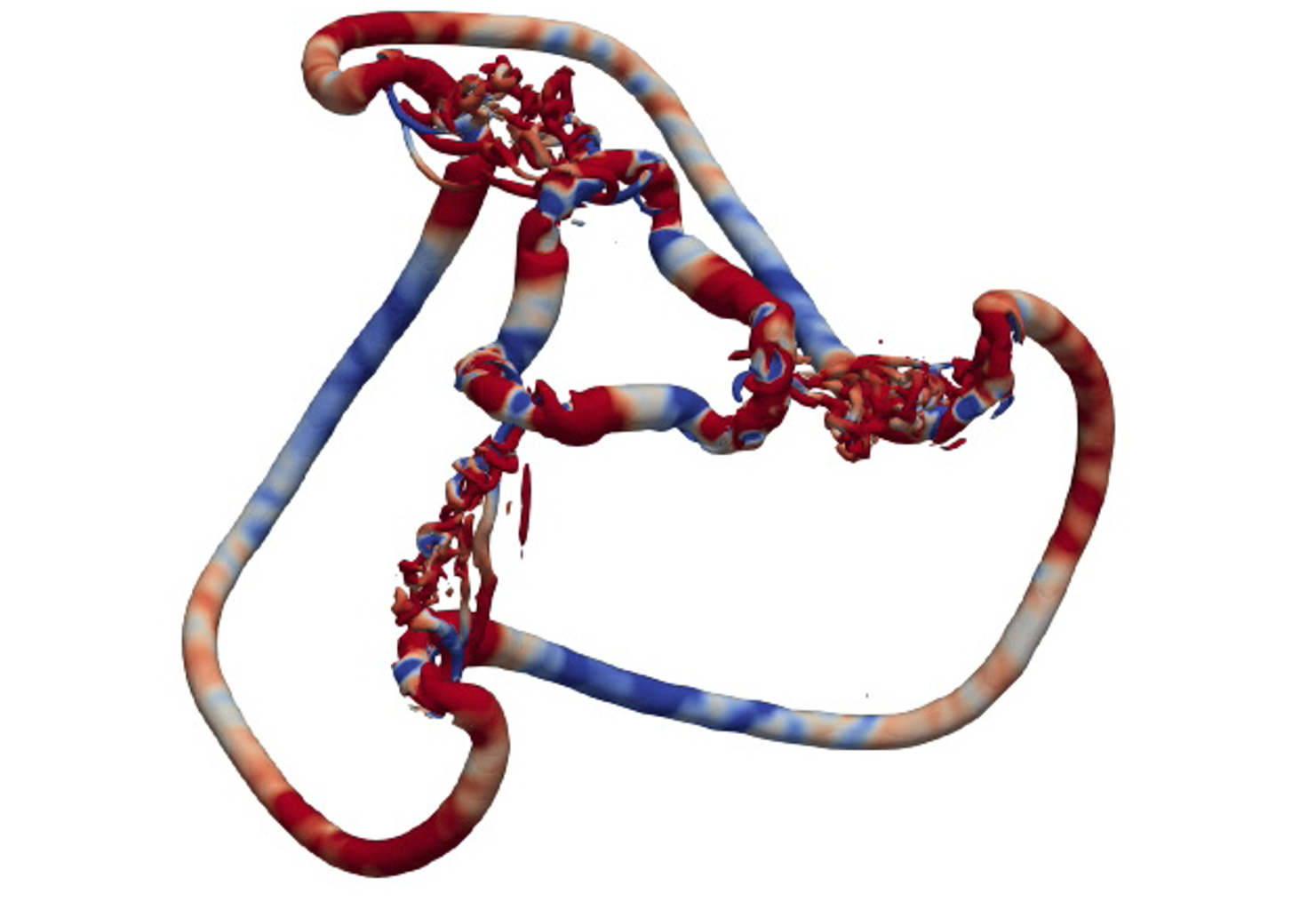

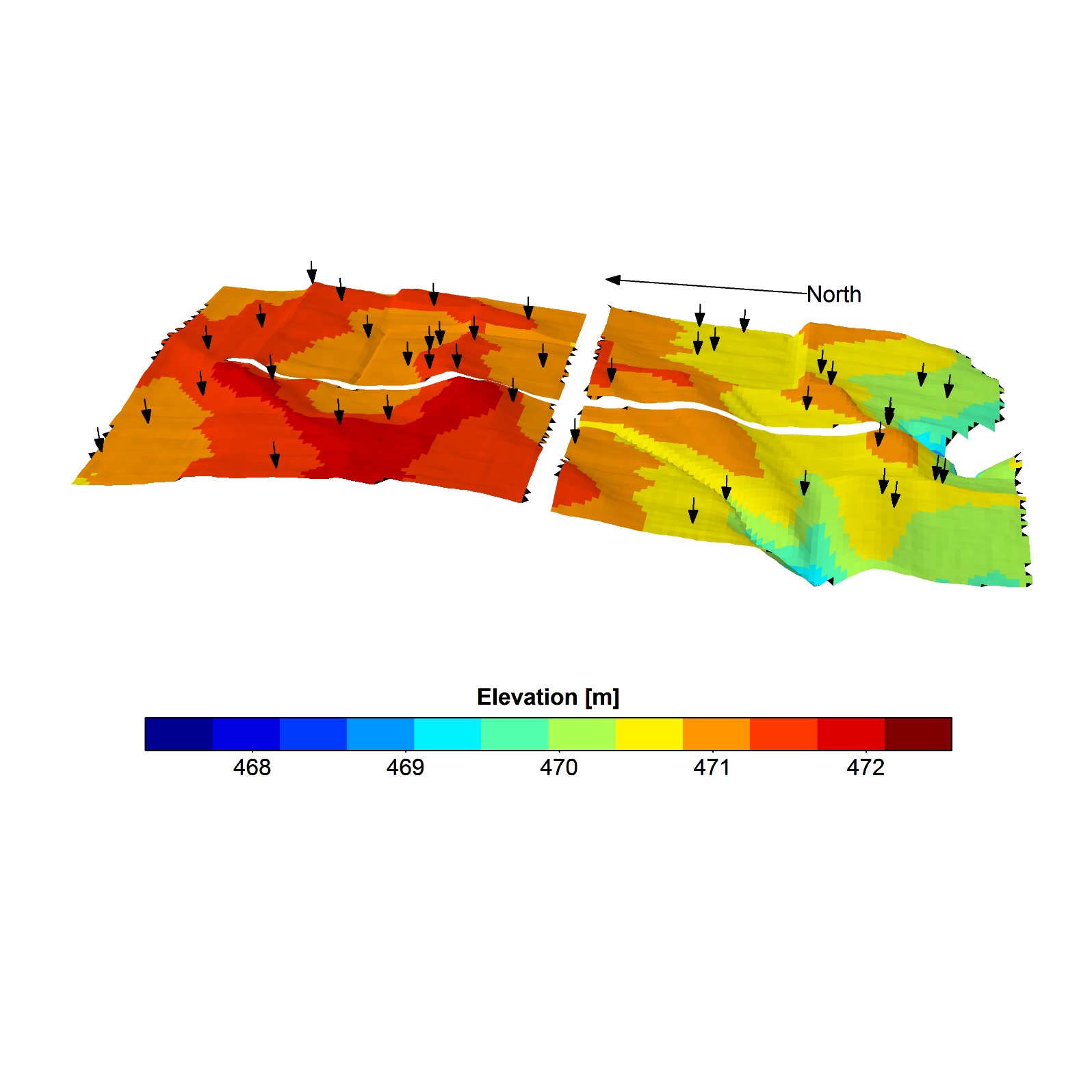

Sustainable Economy and Planet

Learn how HPC supports sustainability, agriculture, and environmental research for a better future.

Artificial Intelligence

Explore how AI and supercomputing together power innovation across disciplines.